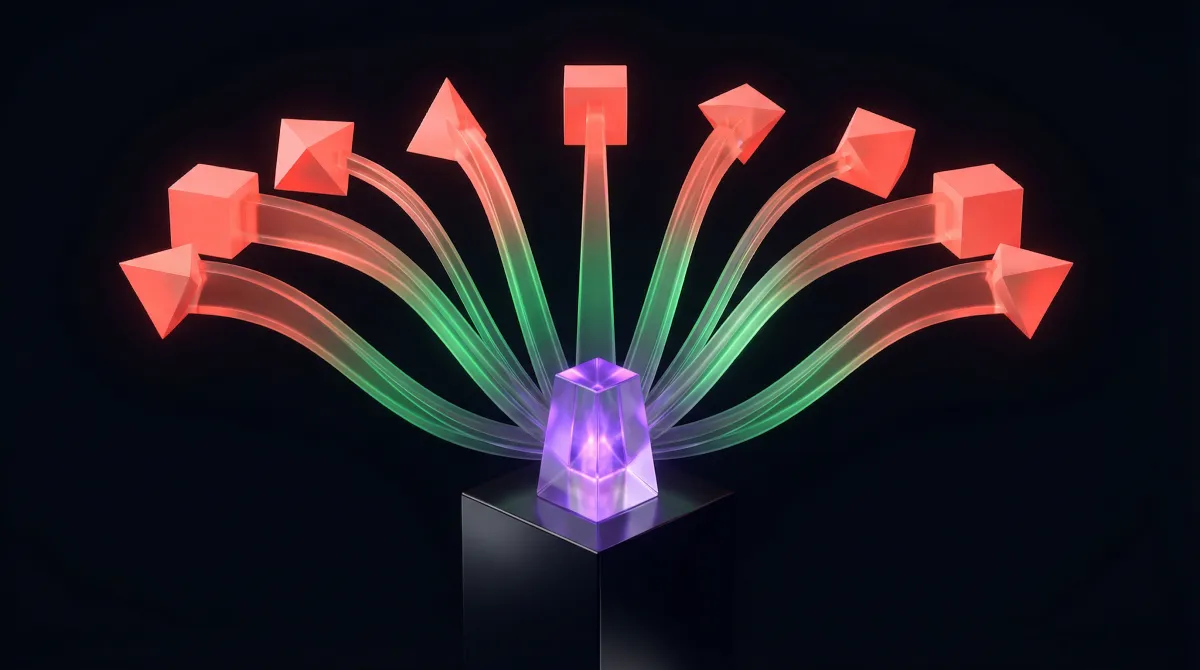

AI citation sources are the off-site platforms AI systems pull from, trust, or use to validate recommendations. If you want to understand why one business gets cited in ChatGPT, Perplexity, or Google AI results and another does not, this is the map: Google Business Profile, reviews, directories, local editorial coverage, YouTube, Reddit, LinkedIn, Quora, and the vertical reputation layers underneath them.

The practical takeaway is sharper than most generative engine optimization (GEO) advice: AI citation sources are not just Reddit plus YouTube. For local and service businesses, the real center of gravity is usually Google Business Profile, reviews, vertical directories, local editorial trust, and credential consistency. Community platforms still matter, but they are usually a supporting layer, not the whole model.

This page is built as a research-backed source map for off-site citation strategy. It synthesizes a March 27, 2026 research pass across 15 off-site surface types and 3 vertical stacks, then translates that into an action order you can actually use. If you want the broader framework behind this, start with how to get cited by AI. If you want the underlying KPI, see what AI visibility means.

Bottom line: if you want to influence AI citations for real commercial queries, build an off-site trust stack, not a random posting calendar.

Reading note: the strongest claims below link to source material in context, and the final sources section keeps the full reference stack.

What Are the Best Off-Site AI Citation Strategies?

If you only remember one thing from this page, remember the order. Most brands waste time on scattered posting when the bigger gains come from fixing the third-party sources AI systems already trust.

- Own your entity layer first. Claim and complete Google Business Profile, then clean up Apple Maps and Bing Places. If the entity layer is weak, every layer built on top of it inherits the same inconsistencies.

- Prioritize reviews and vertical directories before generic social. For local commercial and regulated categories, reviews, specialist directories, and credential sources usually influence AI citations more than casual posting.

- Earn corroboration, not just mentions. Local editorial coverage, best-of listicles, association pages, and licensing or board-certification profiles create independent support that AI systems can trust.

- Use YouTube as your scalable proof layer. Clear expert-led videos, transcripts, and query-matching titles make YouTube one of the strongest universal citation-source plays.

- Use Reddit and LinkedIn selectively. They are powerful where community consensus or professional authority matters, but they should support the trust stack, not replace it.

- Build by vertical, not by platform checklist. Plastic surgeons, personal injury (PI) lawyers, and cosmetic dentists do not need the same third-party stack. The right move is almost always vertical-specific.

That is the difference between a real citation strategy and an off-site content treadmill. If you need the broader search-wide playbook beyond citations, pair this with how to appear in AI search, how to show up in AI Overviews, and our complete AI SEO guide.

The Off-Site AI Citation Stack

For local and service businesses, the highest-leverage off-site stack usually looks like this:

- Google Business Profile / Google Maps

- One or more strong review or vertical directory surfaces

- Local editorial mentions, best-of listicles, and digital PR

- Credential, licensing, and association sources

- YouTube

- Selective community and professional platforms such as Reddit, LinkedIn, and Quora

That order matters. Generic community platforms influence broad discovery and comparison prompts. Maps, reviews, directories, and credential layers dominate much more often in local recommendation and practitioner-selection prompts, which means they often shape the AI citation source set more than marketers expect.

How to Audit AI Citation Sources

You do not need to guess where to start. A useful citation-source audit is just a comparison between the sources you already control, the sources your competitors own, and the sources AI systems are most likely to trust in your category.

- List the core source types for your category. Start with maps, reviews, vertical directories, credential sources, editorial mentions, YouTube, and selective community platforms.

- Score your current footprint. Mark every profile or source as claimed, complete, active, reviewed, cited, or missing.

- Check the top competitors by prompt, not just by Google rank. Look at the businesses that keep appearing in recommendation answers and compare their off-site stack to yours.

- Fix the entity and review layers first. In most local markets, incomplete profiles and weak review signals are easier to improve and more important than speculative community plays.

- Add editorial and credential proof second. Once the core profile layer is stable, work outward into listicles, local press, associations, board certification, and licensing consistency.

- Use YouTube, Reddit, LinkedIn, and Quora as amplification. These work best when they reinforce an already credible source stack.

If you already track AI visibility, think of this as the off-site diagnostic layer underneath the metric. It explains why one brand is getting cited and another is being ignored.
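The scoring and comparison steps above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed method: the status names come from the audit step, but the numeric weights, the `SurfaceAudit` record, and the `rank_gaps` helper are all assumptions made for the example, and the statuses are treated as a rough ladder even though real profiles can be, say, reviewed without being complete.

```python
from dataclasses import dataclass

# Status names come from the audit step; the numeric weights are
# illustrative assumptions, not a measured scale.
STATUS_SCORE = {
    "missing": 0,
    "claimed": 1,
    "complete": 2,
    "active": 3,
    "reviewed": 4,
    "cited": 5,
}


@dataclass(frozen=True)
class SurfaceAudit:
    """One row of the audit: a surface plus your status and a competitor's."""
    surface: str
    own_status: str
    competitor_status: str


def rank_gaps(audits):
    """Return (surface, gap) pairs where the competitor is ahead, largest gap first."""
    gaps = []
    for audit in audits:
        gap = STATUS_SCORE[audit.competitor_status] - STATUS_SCORE[audit.own_status]
        if gap > 0:
            gaps.append((audit.surface, gap))
    return sorted(gaps, key=lambda pair: pair[1], reverse=True)


# Hypothetical audit rows for a single competitor.
audits = [
    SurfaceAudit("Google Business Profile", "complete", "reviewed"),
    SurfaceAudit("Yelp", "missing", "active"),
    SurfaceAudit("YouTube", "active", "claimed"),
]

for surface, gap in rank_gaps(audits):
    print(f"{surface}: competitor ahead by {gap}")
```

Surfaces where you already lead (YouTube in this made-up data) drop out, so the result reads as a short list of where a competitor's off-site stack is stronger than yours.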

How Should You Prioritize Off-Site Surfaces?

| Surface | Main AI Role | Best Fit | Priority |
|---|---|---|---|
| Google Business Profile & Google Maps | Local entity grounding, reviews, place discovery | Nearly every local/service brand | Must-have |
| Yelp | Review proof and local comparison | Dentists, home services, local commercial categories | Must-have where category fits |
| Local news, digital PR, best-of listicles | Third-party recommendation and editorial trust | Local recommendation prompts | Must-have |
| Associations, certifications, licensing directories | Credential validation and expert trust | Medical, legal, regulated experts | Must-have in regulated verticals |
| YouTube | Demonstration, explanation, high-frequency citation source | Cross-vertical, especially procedure and expert education | High |
| Healthgrades | Provider comparison, reviews, affiliation proof | Physician-led medical practices | Must-have for physician-led medical |
| RealSelf | Cosmetic procedure authority, before/after proof | Plastic surgery and aesthetics | Must-have for aesthetics |
| Zocdoc | Booking intent and appointment discovery | Dentists and clinics | High / selective |
| Avvo, Super Lawyers, Justia, FindLaw | Legal trust, awards, profiles, reviews | PI and consumer legal | Must-have for legal |
| Reddit | Community validation and comparisons | Broad discovery and debated categories | Selective high |
| LinkedIn | Professional authority and entity reinforcement | B2B, expert-led, founder-led brands | Selective high |
| Apple Business Connect & Apple Maps | Entity consistency and Apple ecosystem discovery | Local brands with mobile-heavy audiences | Hygiene to high |
| Bing Places | Bing and Copilot local visibility | Local brands where Bing/Copilot matters | Hygiene to high |
| Quora | Question-led citations and pain-point capture | Informational categories | Selective |
| Wikipedia & Wikidata | Reference and entity graph authority | Notable brands, people, and topics | Opportunistic |

Surface-by-Surface Breakdown

1. Google Business Profile & Google Maps

This is the single most important off-site local surface. Google’s AI search guidance explicitly tells businesses to keep Business Profile information current, and Google’s business-details documentation shows how those fields help it understand local entities. In AI systems, Google Business Profile (GBP) often acts less like a classic citation source and more like an entity-resolution and grounding layer for local recommendations.

- Why it matters: category selection, reviews, photos, hours, Q&A, location consistency, and service attributes all feed local trust.

- What matters most: primary and secondary categories, exact business name, review volume and recency, photo freshness, holiday hours, services, and practitioner-location association.

- Agency stance: audit it monthly, treat it as infrastructure, and never promise AI Overview placement from GBP edits alone.

2. Apple Business Connect & Apple Maps

Apple’s local stack looks more like entity hygiene plus secondary discovery than a primary AI citation lever, but it is more important than many SEO teams assume. Apple Business Connect and Apple’s support documentation position it as the control layer for how brands appear across Maps and related Apple surfaces.

- Best role: consistency across Maps, Siri, and Apple ecosystem surfaces.

- What matters most: claimed place card, accurate description, hours, contact details, photos, and action links for scheduling or ordering.

- Practical read: worthwhile for local brands, especially mobile-heavy ones, but weaker than Google as a direct AI visibility source.

3. Bing Places

Bing Places matters more now that Microsoft is tying local business data more directly into Bing Maps and Copilot. Microsoft’s October 2025 relaunch announcement explicitly framed deeper Bing Maps and Copilot integration as part of the product direction.

- Best role: Bing and Copilot local discovery, plus cross-ecosystem entity coverage.

- What matters most: claimed profile, accurate hours, address, phone, categories, photos, and clean imports from Google.

- Practical read: still secondary to GBP, but no longer trivial if Copilot or Bing matters to the market.

4. Yelp

Yelp remains one of the strongest general-purpose review and comparison surfaces for local businesses. It is especially relevant when the prompt has a best, top, near me, or reviews flavor. This section is partly an inference from local recommendation behavior and broader AI citation studies rather than a single Yelp-specific citation benchmark.

- Best role: review proof and comparative local trust.

- What matters most: star rating, review recency, review volume, categories, photos, listing completeness, and response hygiene.

- Best fit: dentists and many consumer local categories; less central than legal directories for PI law.

5. Local News, Digital PR, and Best-of Listicles

This is one of the most underrated off-site levers. Editorial mentions and city-specific listicles give AI systems a way to justify recommendations with independent framing.

- Best role: third-party corroboration for “best X in city” queries.

- What works: local news, city guides, lifestyle magazines, community awards, expert commentary, and original local data stories.

- Agency stance: build target lists by city and vertical, and do not treat low-trust paid placements as interchangeable with real editorial credibility.

- Mini-playbook: build a city-by-city list of publishers, identify the “best of” and expert-roundup formats they already run, then pitch proof-backed angles rather than generic brand stories.

6. Industry Associations, Board Certifications, and Licensing Directories

For regulated categories, credential sources often matter more than social platforms. These databases help AI systems resolve whether the practitioner is real, licensed, specialized, and credible.

- Best role: expert legitimacy and entity verification.

- What matters most: exact legal name, specialty or practice area, board certification, license status, education, affiliations, and location consistency.

- Best fit: critical for plastic surgeons and PI lawyers, high priority for cosmetic dentists.

7. Healthgrades

Healthgrades is a core medical-directory surface because it combines provider profiles, qualifications, affiliations, patient reviews, and operational discovery signals. Its own methodology pages, patient review documentation, and Zocdoc booking partnership announcement make that role fairly explicit.

- Best role: provider comparison, affiliation proof, and medical trust.

- What matters most: specialties, procedures treated, affiliations, reviews, accepted insurance, appointment pathways, and profile completeness.

- Best fit: high for plastic surgeons, medium-high where cosmetic dentistry overlaps with physician-led treatment, irrelevant for PI law.

8. RealSelf

RealSelf is one of the strongest vertical platforms in aesthetics because it combines doctor profiles, reviews, Q&A, and before/after proof in a cosmetic-specific environment. RealSelf’s doctor FAQs and recognition criteria show how it ties doctor identity, specialty alignment, and activity to trust signals on the platform.

- Best role: cosmetic procedure authority and intent-aligned trust.

- What matters most: claimed doctor profile, specialty accuracy, board-certification alignment, Q&A participation, review quality, and before/after content.

- Best fit: critical for plastic surgeons and aesthetic practices; much weaker for general legal or non-aesthetic categories.

9. Zocdoc

Zocdoc is strongest where booking intent matters. It is not just a profile directory; it is an operational discovery and scheduling layer. Zocdoc’s verified review documentation and Patient Choice criteria make clear that bookings, reviews, and reliability are central to the platform.

- Best role: appointment-ready discovery for medical and dental practices.

- What matters most: availability reliability, insurance accuracy, specialty match, verified review volume, and booking readiness.

- Best fit: high for cosmetic dentists and medical clinics, selective for plastic surgery, irrelevant for PI lawyers.

10. Legal Reputation Stack: Avvo, Super Lawyers, Justia, FindLaw

For PI and consumer legal, this is the legal equivalent of the medical directory stack. Each platform contributes a different piece of trust.

- Avvo: ratings, reviews, profile depth, and Q&A visibility.

- Super Lawyers: selection-based recognition and status signaling.

- Justia: profiles plus Ask A Lawyer visibility.

- FindLaw: high-traffic directory exposure and professional profile presence.

What matters most: claimed and complete profiles, exact practice areas, bar admissions, awards, office locations, bios, and consistent case-result framing where allowed.

Mini-playbook: start with the profiles most likely to be cited in consumer recommendation prompts, make practice areas and jurisdictions painfully explicit, then standardize attorney bios and recognition language across the stack.

11. YouTube

YouTube is one of the few universally strong off-site surfaces across models. It blends authority, demonstration, and explanation in a way that maps cleanly to AI answers. The strongest public support here comes from Surfer’s AI citation report, Semrush’s most-cited domains study, and AirOps’ community and UGC report.

- Best role: procedure walkthroughs, visual explanations, expert commentary, and non-branded educational discovery.

- What matters most: query-matching titles, spoken clarity, transcripts, descriptions, chapters, and visible expert authority.

- Best fit: high for plastic surgeons and cosmetic dentists; still useful for legal education and general expertise.

12. Reddit

Reddit matters, but more selectively than early hype suggested. It is strongest in prompts that ask what real people think, compare options, or surface community consensus. The best current public references are AirOps, Profound, and Semrush.

- Best role: discovery, comparisons, community validation, category education.

- Weak use case: regulated practitioner selection, where directories and reviews matter more.

- Agency stance: use real expertise and real accounts, draft helpful answers, and avoid covert posting schemes.

13. LinkedIn

LinkedIn is materially stronger than many GEO takes assume, especially for expert-led, founder-led, and B2B visibility. The strongest current source here is Semrush’s LinkedIn AI visibility study, which found LinkedIn appearing in AI answers more often than many marketers expected.

- Best role: professional authority and semantic reinforcement around people, companies, and expertise.

- What matters most: complete company pages, strong personal profiles, original posts, expertise framing, and consistent company-category language.

- Best fit: agencies, consultants, professional services, and experts. Weaker for pure local consumer recommendation prompts.

14. Quora

Quora is not dead, but it is not a universal priority. It still matters where there is genuine question-led demand and direct-answer content fits the query format. The clearest recent source here is Semrush’s Quora and Google AI Mode research.

- Best role: informational and problem-aware Q&A capture.

- What matters most: direct answers, non-spam tone, topic fit, and genuine question demand.

- Best fit: selective tests, not default retainer scope.

15. Wikipedia & Wikidata

Wikipedia and Wikidata still matter as reference and entity graph layers, but mainly for notable entities, not ordinary local businesses. The broadest citation support for that still comes from cross-model domain studies like Semrush’s most-cited domains analysis.

- Best role: reference authority and entity graph reinforcement.

- What matters most: notability, independent coverage, stable factual identity, and existing entity footprint.

- Practical read: high value for notable brands or people, but not realistic as a primary play for most local service businesses.

Vertical Off-Site Stacks

Use this as an audit order: map the vertical stack you should own, score your current footprint, then work from profiles and reviews upward into editorial and media. That sequence is much more reliable than starting with Reddit or random PR.

How to Turn This Into an Execution Plan

If you want to operationalize this instead of just reading it, do not turn it into a vague “more off-site SEO” project. Turn it into a five-layer execution system with a clear order of work.

- Entity layer: GBP, Apple Maps, Bing Places, core name-category-description consistency.

- Review and directory layer: Yelp or vertical review surfaces such as Healthgrades, RealSelf, Zocdoc, or legal directories.

- Credential layer: licensing, board certification, associations, affiliations.

- Editorial layer: local press, best-of listicles, city guides, niche publisher coverage.

- Media and community layer: YouTube, LinkedIn, Reddit, Quora.

Hard conclusion: for local service brands, maps + reviews + vertical directories + editorial proof beat generic social in commercial recommendation prompts.
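One way to keep the order of work honest is to tag every task with its layer and sort the backlog accordingly. The sketch below assumes a simple list of `(layer, task)` pairs; the layer keys, the `build_queue` helper, and the sample tasks are hypothetical names chosen for illustration.

```python
# The five layers from the list above, in the order the work should happen.
# The short keys are assumed labels for this example.
LAYER_ORDER = [
    "entity",
    "review_directory",
    "credential",
    "editorial",
    "media_community",
]


def build_queue(tasks):
    """Sort (layer, task) pairs by layer order, keeping input order within a layer."""
    rank = {layer: position for position, layer in enumerate(LAYER_ORDER)}
    # sorted() is stable, so tasks in the same layer stay in their original order.
    return sorted(tasks, key=lambda task: rank[task[0]])


# Hypothetical backlog, deliberately out of order.
tasks = [
    ("media_community", "Publish procedure walkthrough on YouTube"),
    ("entity", "Fix GBP primary category"),
    ("editorial", "Pitch city best-of roundup"),
    ("entity", "Claim Bing Places listing"),
    ("credential", "Align board-certification wording"),
]

queue = build_queue(tasks)
for layer, task in queue:
    print(f"[{layer}] {task}")
```

The point of the design is that a YouTube idea can sit in the backlog without jumping ahead of an unfixed entity or review problem.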

How AI Agents Can Do This for You

Agents are useful here because off-site AI visibility is really a multi-surface operating system. Each surface has its own audit, copy, checklist, and monitoring loop. That work is repetitive, data-heavy, and easy to split into lanes.

In practice, an agent workflow can do all of this:

- Audit your off-site footprint: inventory every profile, directory, review surface, social surface, and missing listing by vertical.

- Compare competitors: build side-by-side visibility maps for top local competitors and identify which surfaces they own that you do not.

- Normalize entity data: draft consistent name, description, specialty, category, and service copy for GBP, Apple Maps, Bing Places, LinkedIn, directories, and bios.

- Create profile optimization queues: turn each platform into a field-by-field punch list instead of vague strategy talk.

- Build review and reputation workflows: draft review request templates, review response templates, and monthly review-health reports.

- Generate PR and listicle targets: compile city-specific publishers, best-of pages, local magazines, and niche editors with pitch angles.

- Repurpose one asset into many surfaces: turn a strong source document into YouTube scripts, LinkedIn posts, Reddit answers, Quora drafts, profile blurbs, and media talking points.

- Monitor change over time: track new mentions, review velocity, profile completeness, lost listings, and competitor movement weekly or monthly.

What agents should not do unsupervised:

- make regulated claims for medical or legal businesses

- buy or operate fake accounts

- post deceptive community content

- send outreach without approval

- change profile facts without a source of truth

The practical model is: agents do the research, drafting, organization, QA, and monitoring; humans handle approvals, accounts, relationships, and compliance-sensitive actions.
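As a concrete example of that split, an agent can compare each listing against a single source-of-truth record and report mismatches for a human to approve, rather than editing profiles itself. This is a rough sketch under stated assumptions: the field names, the `normalize` and `find_mismatches` helpers, and the sample business data are all invented for illustration, and no platform API is called.

```python
import re


def normalize(field, value):
    """Normalize a value so cosmetic differences do not count as mismatches."""
    if field == "phone":
        return re.sub(r"\D", "", value)  # compare digits only
    return " ".join(value.lower().split())  # ignore case and extra whitespace


def find_mismatches(source_of_truth, listings):
    """Return (platform, field, listed_value) for each field that disagrees."""
    mismatches = []
    for platform, fields in listings.items():
        for field, expected in source_of_truth.items():
            listed = fields.get(field, "")
            if normalize(field, listed) != normalize(field, expected):
                mismatches.append((platform, field, listed))
    return mismatches


# Hypothetical source-of-truth record and two exported listings.
source_of_truth = {
    "name": "Example Dental Studio",
    "phone": "(555) 010-0199",
    "address": "12 Main St, Springfield",
}
listings = {
    "gbp": {
        "name": "Example Dental Studio",
        "phone": "555-010-0199",
        "address": "12 Main St, Springfield",
    },
    "bing_places": {
        "name": "Example Dental",
        "phone": "(555) 010-0199",
        "address": "12 Main St,  Springfield",
    },
}

# The output is a review list for a human, not an instruction to change anything.
for platform, field, listed in find_mismatches(source_of_truth, listings):
    print(f"{platform}: '{field}' is listed as '{listed}'")
```

Phone formatting and stray whitespace are ignored on purpose, so the report only surfaces differences a person actually needs to look at, such as the truncated business name in the sample data.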

FAQ

What are AI citation sources?

AI citation sources are the third-party platforms, pages, and profiles AI systems pull from, trust, or use to validate recommendations. In practice, that usually means maps, reviews, directories, editorial coverage, YouTube, and selective community platforms.

Is this the same as link building?

No. Link building is about authority and referral pathways between websites. AI citation-source work is about showing up on the third-party surfaces AI systems actually reference when they answer recommendation and comparison queries.

Which off-site platforms matter most for local service businesses?

Usually Google Business Profile first, then reviews and vertical directories, then editorial and credential sources, then YouTube, and finally selective community platforms like Reddit and LinkedIn. The exact order changes by vertical.

How long do off-site changes take to affect AI citations?

There is no single timing rule. Profile fixes, review changes, and fresh editorial mentions can matter faster than broad reputation shifts, but you should expect a rolling effect over weeks to months rather than an instant jump.

Do Reddit and YouTube matter more than reviews and directories?

Not usually for local commercial recommendation prompts. Reddit and YouTube can be very strong, but reviews, directories, and credential sources usually carry more weight in practitioner-selection and local trust queries.

How can AI agents help with AI citation-source work?

Agents are useful for the research and operations layer: auditing profiles, comparing competitors, drafting normalized profile copy, building review and directory checklists, creating PR target lists, and monitoring changes over time. Humans still need to approve claims, manage outreach, handle accounts, and make compliance-sensitive decisions.

How should I use this page with the rest of your content?

Use this page when you want the off-site citation-source map. Use how to get cited by AI for the broader framework, what is AI visibility for the KPI, and how to appear in AI search for the cross-platform playbook.

Sources

This page is based on our internal March 27, 2026 research pass on AI citation sources, which drew from 15 off-site surface types, 3 target vertical stacks, platform docs, public studies, and earlier repo research. Key sources include:

- Google Search documentation on AI features

- Google guidance on succeeding in AI search

- Google guidance on establishing business details

- Apple Business Connect

- Microsoft on the new Bing Places

- Healthgrades methodologies

- RealSelf doctor FAQs

- Zocdoc verified reviews

- Avvo rating documentation

- Justia lawyer directory listings

- AirOps community and UGC in AI search

- Semrush most-cited domains in AI

- Semrush LinkedIn AI visibility study

- Semrush Quora and Google AI Mode research

- Surfer AI citation report

- Profound on Reddit and AI search